Linking facial recognition of emotions and socially shared regulation in medical simulation

Abstract

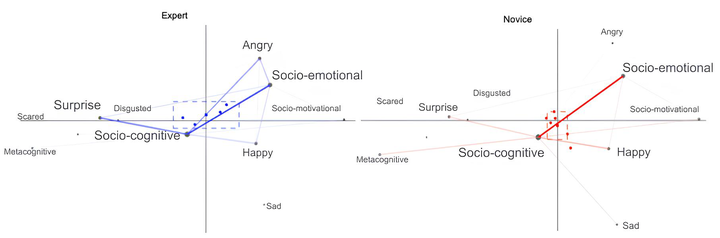

Computer-supported simulation offers a practical alternative for medical training. This study investigates the co-occurrence of emotions derived from facial recognition and socially shared regulation of learning (SSRL) interactions in a medical simulation training context. Using transmodal analysis (TMA), we compare novice and expert learners’ affective and cognitive engagement patterns during collaborative virtual diagnosis tasks. Results reveal that expert learners exhibit strong associations between socio-cognitive interactions and high-arousal emotions (surprise, anger), suggesting focused, effortful engagement. In contrast, novice learners show stronger links between socio-cognitive processes and happiness or sadness, along with less coherent SSRL patterns, potentially indicating distraction or cognitive overload. Applied to multimodal data (facial expressions and discourse), TMA highlights distinct regulatory strategies between the two groups, offering methodological and practical insights for computer-supported cooperative work (CSCW) in medical education. Our findings underscore the role of emotion-regulation dynamics in the development of collaborative expertise and suggest the need for tailored scaffolding to support novice learners’ socio-cognitive and affective engagement.
